
Amazon EKS cluster management using kro & ACK

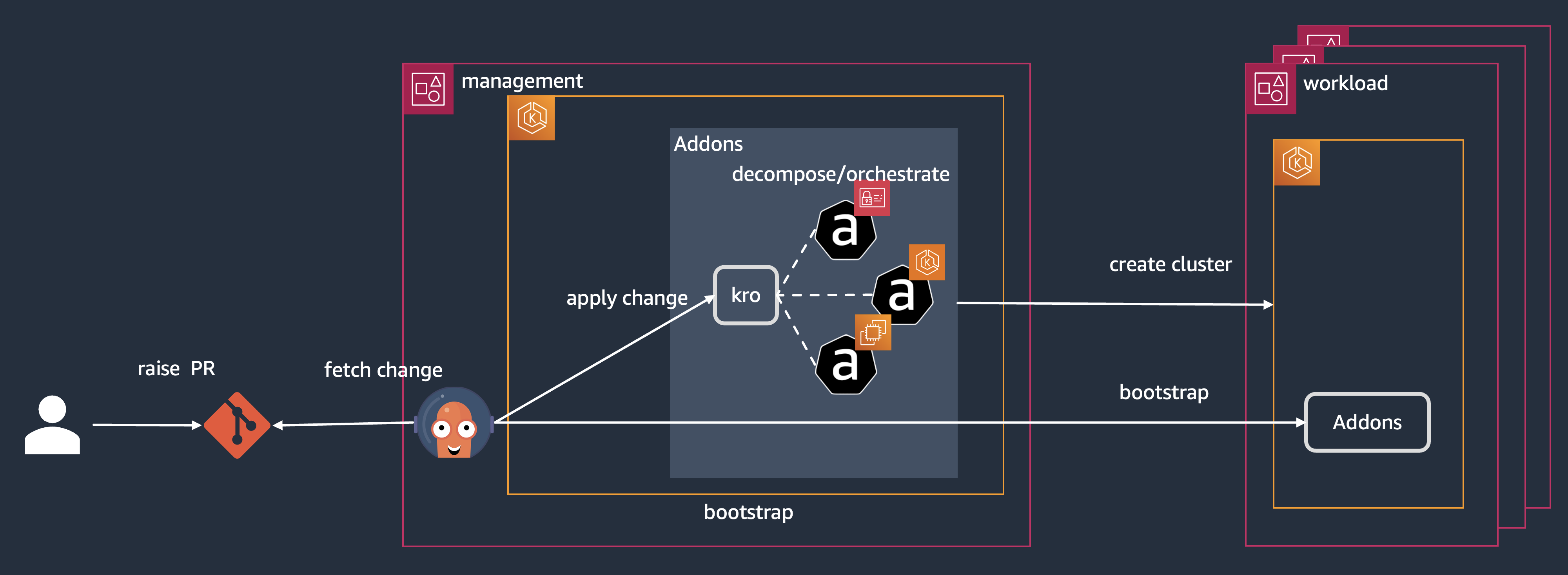

This example demonstrates how to manage a fleet of Amazon EKS clusters using kro, ACK (AWS Controllers for Kubernetes), and Argo CD across multiple regions and accounts. You'll learn how to create EKS clusters and bootstrap them with required add-ons.

The solution implements a hub-spoke model where a management cluster (hub) is created during initial setup, with EKS capabilities (kro, ACK and Argo CD) enabled for provisioning and bootstrapping workload clusters (spokes) via a GitOps flow.

Prerequisites

- AWS account for the management cluster, and optional AWS accounts for spoke clusters (management account can be reused for spokes)

- AWS IAM Identity Center (IdC) is enabled in the management account

- GitHub account and a valid GitHub Token

- GitHub CLI

- Argo CD CLI

- Terraform CLI

- AWS CLI v2.32.27+

Instructions

Configure workspace

- Create variables

First, set these environment variables that typically don't need modification:

    export KRO_REPO_URL="https://github.com/kubernetes-sigs/kro.git"
    export WORKING_REPO="eks-cluster-mgmt" # Try to keep this default name as it is referenced in terraform and gitops configs
    export TF_VAR_FILE="terraform.tfvars" # the name of terraform configuration file to use

Then customize these variables for your specific environment:

    export MGMT_ACCOUNT_ID=$(aws sts get-caller-identity --output text --query Account) # Or update to the AWS account to use for your management cluster
    export AWS_REGION="us-west-2" # change to your preferred region
    export WORKSPACE_PATH="$HOME" # the directory where repos will be cloned
    export GITHUB_ORG_NAME="iamahgoub" # your Github username or organization name
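Later commands interpolate these variables into paths and URLs, so it can help to confirm that none of them is empty before continuing. The following is a minimal sketch in POSIX shell; `require_vars` is a hypothetical helper name and is not part of the kro example.

```shell
# require_vars NAME...
# Returns non-zero and reports each variable that is unset or empty.
require_vars() {
  _rv_missing=0
  for _rv_name in "$@"; do
    # Look up the value of the variable whose name is stored in _rv_name
    eval "_rv_value=\${$_rv_name:-}"
    if [ -z "$_rv_value" ]; then
      echo "missing: $_rv_name" >&2
      _rv_missing=1
    fi
  done
  return "$_rv_missing"
}
```

For example, `require_vars KRO_REPO_URL WORKING_REPO TF_VAR_FILE MGMT_ACCOUNT_ID AWS_REGION WORKSPACE_PATH GITHUB_ORG_NAME` returns non-zero and names any variable that still needs a value.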

- Clone kro repository

    git clone $KRO_REPO_URL $WORKSPACE_PATH/kro

- Create your working GitHub repository

Create a new repository using the GitHub CLI or through the GitHub website:

    gh repo create $WORKING_REPO --private

- Clone the working empty git repository

    gh repo clone $WORKING_REPO $WORKSPACE_PATH/$WORKING_REPO

- Populate the repository

    cp -r $WORKSPACE_PATH/kro/examples/aws/eks-cluster-mgmt/* $WORKSPACE_PATH/$WORKING_REPO/

- Update the Spoke accounts

If deploying EKS clusters across multiple AWS accounts, update the configuration below. Even for single account deployments, you must specify the AWS account for each namespace.

    code $WORKSPACE_PATH/$WORKING_REPO/addons/tenants/tenant1/default/addons/multi-acct/values.yaml

Values:

    clusters:
      workload-cluster1: "012345678910" # AWS account for workload cluster 1
      workload-cluster2: "123456789101" # AWS account for workload cluster 2

Note: If you only want to use 1 AWS account, reuse the AWS account of your management cluster for the other workload clusters.
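AWS account IDs are 12-digit strings, and a leading zero is significant, which is why the values above are quoted. As a rough sanity check before committing the file, a small shell helper can reject malformed IDs. This is only a sketch; `is_account_id` is a hypothetical name introduced here for illustration.

```shell
# is_account_id VALUE
# Succeeds only if VALUE consists of exactly 12 decimal digits.
is_account_id() {
  case "$1" in
    "" | *[!0-9]*) return 1 ;;  # empty, or contains a non-digit character
  esac
  [ "${#1}" -eq 12 ]
}
```

For example, `is_account_id "$MGMT_ACCOUNT_ID" || echo "unexpected account ID"` flags a value that was truncated or lost its leading zero.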

- Add, Commit and Push changes

    cd $WORKSPACE_PATH/$WORKING_REPO/
    git status
    git add .
    git commit -s -m "initial commit"
    git push

Create the Management cluster

- Update the terraform.tfvars with your values

Modify the terraform.tfvars file with your GitHub working repo details:

  - Set git_org_name

  - Update any gitops_xxx values if you modified the proposed setup (git path, branch...)

  - Confirm gitops_xxx_repo_name is "eks-cluster-mgmt" (or update if modified)

  - Configure accounts_ids with the list of AWS accounts for spoke clusters (use management account ID if creating spoke clusters in the same account)

    # edit: terraform.tfvars
    code $WORKSPACE_PATH/$WORKING_REPO/terraform/hub/terraform.tfvars

- Log in to your AWS management account

Connect to your AWS management account using your preferred authentication method:

    export AWS_PROFILE=management_account # use your own profile or ensure you're connected to the appropriate account

- Apply the Terraform configuration to create the management cluster:

    cd $WORKSPACE_PATH/$WORKING_REPO/terraform/hub
    ./install.sh

Review the proposed changes and accept to deploy.

Note: EKS capabilities are not yet supported by the Terraform AWS provider, so we create them manually using CLI commands.

- Retrieve the Terraform outputs and store them in environment variables:

    export CLUSTER_NAME=$(terraform output -raw cluster_name)
    export ACK_CONTROLLER_ROLE_ARN=$(terraform output -raw ack_controller_role_arn)
    export KRO_CONTROLLER_ROLE_ARN=$(terraform output -raw kro_controller_role_arn)
    export ARGOCD_CONTROLLER_ROLE_ARN=$(terraform output -raw argocd_controller_role_arn)

- Create ACK capability

    aws eks create-capability \
      --region ${AWS_REGION} \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-ack \
      --type ACK \
      --role-arn ${ACK_CONTROLLER_ROLE_ARN} \
      --delete-propagation-policy RETAIN

- Now, we need to create the Argo CD capability. IdC has to be enabled in the management account for that, so before creating the capability, store the IdC instance details and the ID of the user who will access Argo CD in environment variables:

    export IDC_INSTANCE_ARN='<replace with IdC instance ARN>'
    export IDC_USER_ID='<replace with IdC user id>'
    export IDC_REGION='<replace with IdC region>'

- Create Argo CD capability

    aws eks create-capability \
      --region ${AWS_REGION} \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-argocd \
      --type ARGOCD \
      --role-arn ${ARGOCD_CONTROLLER_ROLE_ARN} \
      --delete-propagation-policy RETAIN \
      --configuration '{
        "argoCd": {
          "awsIdc": {
            "idcInstanceArn": "'${IDC_INSTANCE_ARN}'",
            "idcRegion": "'${IDC_REGION}'"
          },
          "rbacRoleMappings": [{
            "role": "ADMIN",
            "identities": [{
              "id": "'${IDC_USER_ID}'",
              "type": "SSO_USER"
            }]
          }]
        }
      }'
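The `--configuration` value above splices shell variables into a single-quoted JSON string, which is easy to get wrong when editing by hand. One possible alternative is to build the same JSON with `printf` and pass the result as one quoted argument. This is a sketch, not part of the kro example: `argocd_capability_config` is a hypothetical helper name, and it assumes the three values contain no double quotes or backslashes, since it performs no JSON escaping.

```shell
# argocd_capability_config IDC_INSTANCE_ARN IDC_REGION IDC_USER_ID
# Prints the Argo CD capability configuration as a single line of JSON.
# Assumption: the arguments contain no characters that need JSON escaping.
argocd_capability_config() {
  printf '{"argoCd":{"awsIdc":{"idcInstanceArn":"%s","idcRegion":"%s"},"rbacRoleMappings":[{"role":"ADMIN","identities":[{"id":"%s","type":"SSO_USER"}]}]}}\n' \
    "$1" "$2" "$3"
}
```

The output could then be passed as `--configuration "$(argocd_capability_config "$IDC_INSTANCE_ARN" "$IDC_REGION" "$IDC_USER_ID")"`.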

- Create kro capability

    aws eks create-capability \
      --region ${AWS_REGION} \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-kro \
      --type KRO \
      --role-arn ${KRO_CONTROLLER_ROLE_ARN} \
      --delete-propagation-policy RETAIN

- Make sure all the capabilities are now enabled by checking status using the console or the describe-capability command. For example:

    aws eks describe-capability \
      --region ${AWS_REGION} \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-argocd \
      --query 'capability.status' \
      --output text

Modify/run the commands above for other capabilities to make sure they are all ACTIVE.
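Capability creation is asynchronous, so the status check usually has to be repeated. A small polling loop can do that. The sketch below is a generic helper that reruns any status command until it prints the expected value; `wait_for_status` is a hypothetical name, and the attempt count and delay are arbitrary choices rather than recommended values.

```shell
# wait_for_status EXPECTED ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
# Reruns COMMAND until its output equals EXPECTED or ATTEMPTS is exhausted.
wait_for_status() {
  _ws_expected=$1
  _ws_attempts=$2
  _ws_delay=$3
  shift 3
  _ws_i=0
  _ws_status=""
  while [ "$_ws_i" -lt "$_ws_attempts" ]; do
    # A failing command is treated as "no status yet" rather than an error
    _ws_status=$("$@" 2>/dev/null) || _ws_status=""
    if [ "$_ws_status" = "$_ws_expected" ]; then
      return 0
    fi
    _ws_i=$((_ws_i + 1))
    sleep "$_ws_delay"
  done
  echo "timed out waiting for $_ws_expected (last status: $_ws_status)" >&2
  return 1
}
```

Used with the command from this step, that would look like `wait_for_status ACTIVE 30 10 aws eks describe-capability --region ${AWS_REGION} --cluster-name ${CLUSTER_NAME} --capability-name ${CLUSTER_NAME}-argocd --query 'capability.status' --output text`, repeated for the `-ack` and `-kro` capabilities.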

- Retrieve the Argo CD server URL and log on using the user provided during the capability creation:

    export ARGOCD_SERVER=$(aws eks describe-capability \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-argocd \
      --query 'capability.configuration.argoCd.serverUrl' \
      --output text \
      --region ${AWS_REGION})
    echo ${ARGOCD_SERVER}
    export ARGOCD_SERVER=${ARGOCD_SERVER#https://}

- Generate an account token from the Argo CD UI (Settings → Accounts → admin → Generate New Token), then set it as an environment variable:

    export ARGOCD_AUTH_TOKEN="<your-token-here>"
    export ARGOCD_OPTS="--grpc-web"

- Configure GitHub repository access (if using a private repository). We automate this process using the Argo CD CLI. You can also configure this in the web interface under "Settings / Repositories".

    export GITHUB_TOKEN="<your-token-here>"
    argocd repo add https://github.com/$GITHUB_ORG_NAME/$WORKING_REPO.git --username $GITHUB_ORG_NAME --password $GITHUB_TOKEN --upsert --name github

Replace the --username value with your own GitHub username if it differs from $GITHUB_ORG_NAME.

Note: If you encounter the error "Failed to load target state: failed to generate manifest for source 1 of 1: rpc error: code = Unknown desc = authentication required", verify your GitHub token settings.

- Connect to the cluster

    aws eks update-kubeconfig --name hub-cluster

- Install the Argo CD App of Apps:

kubectl apply -f $WORKSPACE_PATH/$WORKING_REPO/terraform/hub/bootstrap/applicationsets.yaml

Bootstrap Spoke accounts

For the management cluster to create resources in the spoke AWS accounts, we need to create IAM roles in the spoke accounts that the ACK capability in the management account can assume.

Note: Even if you're only testing this in the management account, you still need to perform this procedure, replacing the list of spoke account numbers with the management account number.

We provide a script to help with that. You need to first connect to each of your spoke accounts and execute the script.

- Log in to your AWS Spoke account

Connect to your AWS spoke account. This example uses specific profiles, but adapt this to your own setup:

    export AWS_PROFILE=spoke_account1 # use your own profile or ensure you're connected to the appropriate account

- Execute the script to configure IAM roles

    cd $WORKSPACE_PATH/$WORKING_REPO/scripts
    ./create_ack_workload_roles.sh

Repeat this step for each spoke account you want to use with the solution.
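If each spoke account has its own AWS CLI profile, the repetition can be scripted. The sketch below runs a command once per profile with `AWS_PROFILE` set for that invocation; `run_for_profiles` is a hypothetical helper, and profile names such as `spoke_account2` are placeholders for your own setup.

```shell
# run_for_profiles "PROFILE1 PROFILE2 ..." COMMAND [ARGS...]
# Runs COMMAND once per profile, stopping at the first failure.
run_for_profiles() {
  _rp_profiles=$1
  shift
  for _rp_profile in $_rp_profiles; do
    echo "==> $_rp_profile"
    # AWS_PROFILE is set only for this invocation of the command
    AWS_PROFILE=$_rp_profile "$@" || return 1
  done
}
```

From the scripts directory, this could be invoked as `run_for_profiles "spoke_account1 spoke_account2" ./create_ack_workload_roles.sh`.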

Create a Spoke cluster

Update $WORKSPACE_PATH/$WORKING_REPO

- Add cluster creation by kro

Edit the file:

    code $WORKSPACE_PATH/$WORKING_REPO/fleet/kro-values/tenants/tenant1/kro-clusters/values.yaml

Configure the AWS accounts for management and spoke accounts:

    workload-cluster1:
      managementAccountId: "012345678910" # replace with your management cluster AWS account ID
      accountId: "123456789101" # replace with your spoke workload cluster AWS account ID (can be the same)
      tenant: "tenant1" # We have only configured tenant1 in the repo. If you change it, you need to duplicate all tenant1 directories
      k8sVersion: "1.30"
      workloads: "true" # Set to true if you want to deploy the workloads namespaces and applications
      gitops:
        addonsRepoUrl: "https://github.com/XXXXX/eks-cluster-mgmt" # replace with your github account
        fleetRepoUrl: "https://github.com/XXXXX/eks-cluster-mgmt"
        platformRepoUrl: "https://github.com/XXXXX/eks-cluster-mgmt"
        workloadRepoUrl: "https://github.com/XXXXX/eks-cluster-mgmt"

- Add, Commit and Push

    cd $WORKSPACE_PATH/$WORKING_REPO/
    git status
    git add .
    git commit -s -m "initial commit"
    git push

- After some time, the cluster should be created in the spoke account.

    kubectl get EksClusterwithvpcs -A

    NAMESPACE   NAME                STATE    SYNCED   AGE
    argocd      workload-cluster1   ACTIVE   True     36m

If you see STATE=ERROR, this may be normal as it will take some time for all dependencies to be ready. Check the logs of kro and ACK controllers for possible configuration errors.

You can also list resources created by kro to validate their status:

    kubectl get vpcs.kro.run -A
    kubectl get vpcs.ec2.services.k8s.aws -A -o yaml # check for errors

If you see errors, double-check the multi-cluster account settings and verify that IAM roles in both management and workload AWS accounts are properly configured.

When VPCs are ready, check EKS resources:

    kubectl get eksclusters.kro.run -A
    kubectl get clusters.eks.services.k8s.aws -A -o yaml # Check for errors
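With several clusters, scanning the table by eye gets tedious. A small filter can list only the rows whose STATE is not ACTIVE. This sketch assumes the column layout shown in the sample output above (NAMESPACE, NAME, STATE, SYNCED, AGE); `non_active_rows` is a hypothetical helper name.

```shell
# non_active_rows
# Reads `kubectl get ... -A` table output on stdin and prints
# "namespace/name: state" for every row whose third column is not ACTIVE.
non_active_rows() {
  # NR > 1 skips the header line; $3 is the STATE column
  awk 'NR > 1 && $3 != "ACTIVE" { print $1 "/" $2 ": " $3 }'
}
```

For example, `kubectl get EksClusterwithvpcs -A | non_active_rows` prints nothing once every cluster has reached ACTIVE.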

- Connect to the spoke cluster

    export AWS_PROFILE=spoke_account1 # use your own profile or ensure you're connected to the appropriate account

Get kubectl configuration (update name and region if needed):

    aws eks update-kubeconfig --name workload-cluster1 --region us-west-2

View deployed resources:

    kubectl get pods -A

Output:

    NAMESPACE          NAME                                                READY   STATUS    RESTARTS   AGE
    external-secrets   external-secrets-679b98f996-74lsb                   1/1     Running   0          70s
    external-secrets   external-secrets-cert-controller-556d7f95c5-h5nvq   1/1     Running   0          70s
    external-secrets   external-secrets-webhook-7b456d589f-6bjzr           1/1     Running   0          70s

This output shows that our GitOps solution has successfully deployed our addons in the cluster.

You can repeat these steps for any additional clusters you want to manage.

Each cluster is created by its kro ResourceGraphDefinition (RGD), deployed to AWS using ACK controllers, and then automatically registered with Argo CD, which can install addons and workloads automatically.

Conclusion

This solution demonstrates a powerful way to manage multiple EKS clusters across different AWS accounts and regions using three key components:

- kro (Kubernetes Resource Orchestrator)

  - Manages complex multi-resource deployments

  - Handles dependencies between resources

  - Provides a declarative way to define EKS clusters and their requirements

- AWS Controllers for Kubernetes (ACK)

  - Enables native AWS resource management from within Kubernetes

  - Supports cross-account operations through namespace isolation

  - Manages AWS resources like VPCs, IAM roles, and EKS clusters

- Argo CD

  - Implements GitOps practices for cluster configuration

  - Automatically bootstraps new clusters with required add-ons

  - Manages workload deployments across the cluster fleet

Key benefits of this architecture:

- Scalability: Easily add new clusters by updating Git configuration

- Consistency: Ensures uniform configuration across all clusters

- Automation: Reduces manual intervention in cluster lifecycle management

- Separation of Concerns: Clear distinction between infrastructure and application management

- Audit Trail: All changes are tracked through Git history

- Multi-Account Support: Secure isolation between different environments or business units

To expand this solution, you can:

- Add more clusters by replicating the configuration pattern

- Customize add-ons and workloads per cluster

- Implement different configurations for different environments (dev, staging, prod)

- Add monitoring and logging solutions across the cluster fleet

- Implement cluster upgrade strategies using the same tooling

The combination of kro, ACK, and Argo CD provides a robust, scalable, and maintainable approach to EKS cluster fleet management.

Clean-up

- Delete the workload clusters by removing their entries from the following file:

    code $WORKSPACE_PATH/$WORKING_REPO/fleet/kro-values/tenants/tenant1/kro-clusters/values.yaml

In the Argo CD UI, synchronize the cluster ApplicationSet with the prune option enabled, or use the CLI:

    argocd app sync clusters --prune

Known issue: Some VPC resources (route tables) are not always deleted properly when workload clusters are removed from the manifests. If this happens, delete the VPC resources manually so that the clean-up can complete, until the issue is identified and resolved.

- Delete the Argo CD App of Apps:

    kubectl delete -f $WORKSPACE_PATH/$WORKING_REPO/terraform/hub/bootstrap/applicationsets.yaml

- Delete the EKS capabilities on the management cluster

    aws eks delete-capability \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-argocd
    aws eks delete-capability \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-kro
    aws eks delete-capability \
      --cluster-name ${CLUSTER_NAME} \
      --capability-name ${CLUSTER_NAME}-ack

- Make sure all the capabilities are deleted by checking the console or by rerunning the describe-capability command shown earlier for each capability.

- Delete Management Cluster

After successfully de-registering all spoke accounts, remove the management cluster created with Terraform:

    cd $WORKSPACE_PATH/$WORKING_REPO/terraform/hub
    ./destroy.sh

- Remove ACK IAM Roles in workload accounts

Finally, connect to each workload account and delete the IAM roles and policies created initially:

    cd $WORKSPACE_PATH/$WORKING_REPO/
    ./scripts/delete_ack_workload_roles.sh ack